Sorry if this has been covered previously. It likely has. But why is it possible for a recipe author to rate their own recipes? Sometimes I like to sort by rating, but there are so many one-review 5 star ratings (almost all from the authors themselves I’m sure) that it makes it a little irritating. I assume people are self-rating to improve search results, but it’s kind of frustrating. This is not a ‘complaint’, just a curiosity for me.

This has been discussed many times before.

Authors can rate their own recipes, because they have an opinion of them - and for those who have LOTS of recipes, their own rating as well as notes help them remember what they thought of their creation. Although most do, not all authors rate their own 5 stars, or rate their own at all. It doesn’t skew ratings (much) because they have one vote…

OK, understood. That’s definitely a site leader decision to make. I’m personally not a fan because it can be used as self-promotion to increase the chances of appearing in a search, and probably mostly is. I figure, if I publish a recipe I probably feel it’s a pretty good one so I’d never rate them myself. I’m totally cool with your decision of course, and have pretty much learned to bypass the one-review 5 stars when searching.

I suppose it’s too much effort to be worth it, but how about a search filter where you could squelch the recipes with only one rating to eliminate the noise? Probably a silly idea.
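For what it's worth, a filter like that is not much logic on its own. Here is a minimal sketch, assuming each recipe is a record with a name, an average rating, and a rating count. The field names and sample data are made up for illustration and are not how the site stores anything.

```python
# Hypothetical recipe records; the field names are assumptions, not ELR's schema.
recipes = [
    {"name": "Recipe A", "avg_rating": 5.0, "rating_count": 1},
    {"name": "Recipe B", "avg_rating": 4.5, "rating_count": 300},
    {"name": "Recipe C", "avg_rating": 4.0, "rating_count": 14},
]


def squelch_low_count(items, min_ratings=2):
    """Keep only recipes that have at least `min_ratings` ratings."""
    return [r for r in items if r["rating_count"] >= min_ratings]


# Filter first, then sort the survivors from highest to lowest average rating.
filtered = sorted(
    squelch_low_count(recipes),
    key=lambda r: r["avg_rating"],
    reverse=True,
)
```

With the default threshold, the single-rating 5 star recipe drops out and the remaining recipes sort by average rating as usual.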

You could always click on rating and it will order recipes from highest to lowest rated. Taste is subjective and personally ratings mean nothing to me. You can really find some 1 star recipes that are great! Just look at the recipe and percentages used and you can pretty well see if it will be a decent mix.

The rating system is one of the biggest problems on the ELR recipe site, right behind the ability for any zero-day mixer to arbitrarily add duplicate or misspelled flavors to the database. More troublesome than self-rating, which to me isn’t really a problem, are the hordes of people who flock to certain mixers’ recipes and give them a 5 star review, even though the recipe was posted only 10 minutes earlier and calls for a 3 week steep. It is also interesting to see that they then downvote other top recipes for some strange reason. It’s almost as if they are trying to game the rating system.

If I may add something other than ratings:

I think a better way to assess the quality of a recipe is the credibility of the mixer.

I think you will find there are very talented mixers that have numerous followers, because they regularly produce excellent recipes.

I also believe that you cannot just look at one recipe from a mixer; you need to review their entire portfolio of mixes.

What I have found is that I have a very similar palate to some DIYers.

And there are a few very talented mixers whose mixes I have no interest in looking over, because they generally do not produce flavor profiles that fit my palate (Tobacco, Menthol, etcetera).

Using the duplicate checker is a great way to help Daath and the moderators clean up the database. You just have to be fairly careful that the real flavor you choose is the correct one, since even wrong names can sometimes have a lot of ratings. I think a thumbs up/thumbs down approach would be really neat as well: when you hover over the thumb, it would tell you ‘so and so liked this’, much like the ‘likes’ used on our posts here in the forum. Everyone is going to have different views on the rating system, which is understandable.

It’s that whole ‘gaming the system’ thing that I’m talking about. It’s one thing for the site leaders to determine how things are presented, but it’s another thing altogether for the community to skew results. There’s virtually nothing that can be done, at least not in an automated fashion, about 5-starring long steep recipes as soon as they are published, of course. I know that prior to actually becoming a member, I did tend to sort by reviews like I do on something like Amazon, and I know devious members are well-aware of this.

I didn’t intend for this to become any sort of heated discussion, especially since this topic has apparently been discussed at length, so I apologize for re-hashing it all. I was just curious initially.

No, you’re OK, and it’s definitely OK to talk about these things. Community input is what drives and shapes the site.

I quickly realized there’s not a whole lot that can be done about this. For folks like you guys who have been here a long time, you are used to the system and how it operates. But for non-member recipe hunters and noobs like me, these system-gamers can really have an impact because we don’t realize that results can be skewed. If I had to guess, I’d say maybe 50% of the high-rated or popular recipes don’t truly deserve it. It takes a while to learn who’s a great mixer and who’s a gamer.

In case you’re wondering, I’m not anywhere near ready to call myself a mixer worthy of follow.

I’d like to share some insight about this problem from a different genre. I’ve haunted various photography forums and sites since the beginning of the internet. Many of those host photos and allow subscribers to rate them. The goal, of course, is to filter good stuff to the top and bad stuff to the bottom.

But every one of those sites quickly got gamed. Buddy groups (cliques) evolved, with buddies upvoting their friends’ images regardless of quality. People would rave about someone’s photo, with a footnote saying “please look at my image here…”. The other person would do that and reciprocate, again regardless of quality. The game got painfully obvious when poor quality photos would float to the top.

Then one site tried to fight back. It was easy for them to analyze their database to find the cliques - a small group who only voted for each other and no one else, and only gave high ratings. It was also easy to find the good reviewers. People who spread their votes at random across a wide number of people, and whose reviews were well spread across the range from OK to Excellent. (Nobody gives bad reviews, so a larger number of positive descriptions is needed - good, very good, excellent, outstanding, etc.)

The site then gave the ability to sort by two criteria. One was “all reviews” and one was “trusted reviews”. That worked fairly well. Bad photos would still show up in the “all reviews” sorting, but almost never in the “trusted reviews” sorting. Trusted reviewers were never revealed. They did not know they were trusted, but that was because the site admin did not want the un-trusted to know who they were.
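To make the idea concrete, here is a rough sketch of how that kind of analysis could look. This is my own guess at the approach and not that site's actual code. The data layout, the function names, and the thresholds (`min_authors`, `min_distinct_scores`) are all assumptions for illustration.

```python
from collections import defaultdict
from statistics import mean


def find_trusted_reviewers(ratings, min_authors=3, min_distinct_scores=2):
    """Return reviewers whose votes are spread over several authors and scores.

    `ratings` is an iterable of (reviewer, author, item, stars) tuples.
    Self-ratings are ignored when judging a reviewer.
    """
    authors_seen = defaultdict(set)
    scores_seen = defaultdict(set)
    for reviewer, author, _item, stars in ratings:
        if reviewer == author:
            continue
        authors_seen[reviewer].add(author)
        scores_seen[reviewer].add(stars)
    return {
        reviewer
        for reviewer in authors_seen
        if len(authors_seen[reviewer]) >= min_authors
        and len(scores_seen[reviewer]) >= min_distinct_scores
    }


def average_rating(ratings, item, trusted=None):
    """Average stars for `item`; if `trusted` is given, count only those reviewers."""
    stars = [
        s
        for reviewer, _author, rated_item, s in ratings
        if rated_item == item and (trusted is None or reviewer in trusted)
    ]
    return mean(stars) if stars else None
```

Calling `average_rating(ratings, item)` gives the “all reviews” number, and passing the set from `find_trusted_reviewers` gives the “trusted reviews” number. A two-person clique that only ever hands each other top marks fails both thresholds, so its votes drop out of the trusted average.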

Unfortunately, the site closed down. Facebook, Flickr, and a few other huge photo hosting sites came along and drove many out of business. But the point is that there is a solution to the rating problem. Maybe not a perfect one, but certainly an improvement worth considering.

@TW12 ELR is a FREE site, and to perfect this Ratings issue sounds like a lot of work. Also maybe this Photography site described above closed down, and the others were more popular (didn’t close down!) because they allowed the clique activity …perhaps the ability to Game the System is a Membership Draw? …and I am in no way implying that drives the policy here on ELR …more like the workload logistics. The ratings system helps, but the DIY Struggle is Real.

I’m not sure if you were responding to me since you quoted someone else, but…

Personally I asked the question as a point of curiosity, not actually a request for action. And personally, while daath’s explanation is perfectly acceptable (and he does own the place, after all) I would still prefer that authors not be allowed to rate their own, but it’s not like I’m going to continue to campaign for it. It really wasn’t intended to broaden out to cliques skewing ratings as much as it was that to me the multitude of self-given five-star single rated recipes hinders my searching. That’s all it was, and with daath stating that it is not changing, that’s fine and dandy with me.

Oh no we’re on the same page. I don’t self rate. If you go to the ELR Home you will notice new recipes scrolling by like it’s a Stock Ticker! I think the average is 15 new recipes an hour …crazy. Some of the best recipes get posted out here in the Forum… Threads like What’s the Best Shake n Vape and What are you Mixing Today. So no criticism. Sore point for many, but workarounds exist, and we’re glad to help.

Maybe if some new people read this they may resist the temptation to pat themselves on the back.

Good point. It’s my nature not to be back-patty so I wouldn’t have anyway. Maybe that’s why it annoys me? But I also realize I’m far too new and unknown to be trying to dictate policy, so I hope no one actually interpreted it that way. I suppose moderating a vaping forum has me a bit sensitive to how the general community behaves.

I’ll say now so it’s clear: I appreciate this place and the service it provides to the DIY community. I appreciate the daily effort put in by the staff to keep the lights on, and for all the work that’s apparently already been done to make this a grand place for DIY mixing. It has many excellent features and so far a great community of users that help buttress the community spirit. I’ve been coming to the recipe side for a long time but only recently created an account, but even as an anonymous bystander I still got great value here.

I have a related question. Sometimes I want to look for a well-rated recipe. When I click on ratings, some of the top results could be 5 star recipes rated by one or two people, while a recipe that was given 4.5 stars but rated by 300 people could be lower in the list. Is there a way to sort recipes by the number of ratings they got? Meaning I’d see the recipes starting from the largest number of ratings and going down, regardless of how many stars.

I say that is helpful because a rating from 600 people is more credible than one from 2 people, even if the former got fewer stars.
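To show what I mean, here is a small sketch of two ways to do it: a plain sort by rating count, and a count-weighted score (often called a Bayesian average) that pulls recipes with few ratings toward a neutral value. This is not how ELR ranks recipes. The sample recipes and the `prior_mean` and `prior_weight` values are made up for illustration.

```python
def bayesian_average(avg, count, prior_mean=3.5, prior_weight=10):
    """Blend a recipe's average with a neutral prior, weighted by rating count."""
    return (prior_weight * prior_mean + count * avg) / (prior_weight + count)


# Hypothetical (name, average stars, number of ratings) tuples.
recipes = [
    ("A", 5.0, 2),
    ("B", 4.5, 300),
    ("C", 4.0, 14),
]

# Option 1: most-rated first, regardless of stars.
by_count = sorted(recipes, key=lambda r: r[2], reverse=True)

# Option 2: stars still matter, but a handful of ratings counts for less.
by_weighted = sorted(
    recipes,
    key=lambda r: bayesian_average(r[1], r[2]),
    reverse=True,
)
```

With these numbers the 5 star recipe with 2 ratings scores 3.75, so it lands below both the 4.5 star recipe with 300 ratings and the 4 star recipe with 14 ratings.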

If there isn’t a way to do that, this is my suggestion, I hope you folks would consider it. Even if you don’t, I would still like to thank you for this awesome website.

As far as I can see, it already works like that.

Did a quick test and a 4 star recipe with 14 ratings was on top of the list, with a 5 star recipe with 3 ratings in 2nd place.

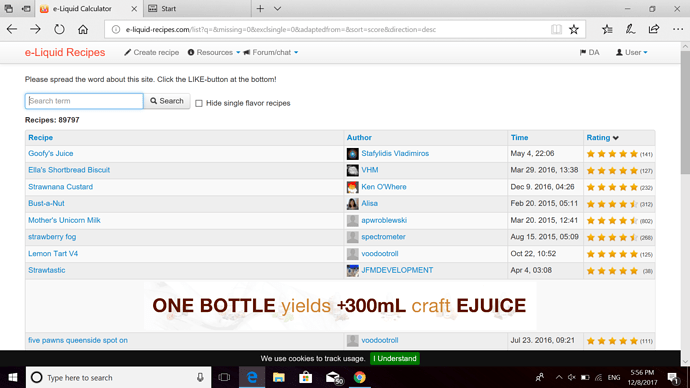

This is what appears on my screen when I click sort by ratings.

apwroblewski’s Mother’s Unicorn Milk has 802 people rating it, but still, there are four recipes that come before it. One has 127 people rating it.

#8 only has 38 people rating it, while #12 has more than 300 people rating it.

I get the importance of sorting by rating, but it would also help to sort by popularity and rating count.

I don’t see much value in number of raters. The rating sort we use is pretty good.

However, I have now implemented an undocumented feature. Simply add ?sort=ratecount to the URL, or change &sort=XXXX so that XXXX says ratecount, and you have it. This means that as soon as you click on, say, page two, it won’t work, and you’ll have to change sort=XXXX again…
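If you would rather not edit the address by hand on every page, a small helper can do the rewrite. This is only a sketch. The `sort` parameter and the `ratecount` value come from the post above, while the function name and the example.com address are placeholders and not the real site URL.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def with_ratecount_sort(url):
    """Return `url` with its `sort` query parameter set to `ratecount`."""
    parts = urlsplit(url)
    # Drop any existing sort parameter, keep everything else in order.
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key != "sort"
    ]
    query.append(("sort", "ratecount"))
    return urlunsplit(parts._replace(query=urlencode(query)))


# Placeholder address, for illustration only.
print(with_ratecount_sort("https://example.com/list?q=milk&sort=rating&page=2"))
```

It handles both cases from the post: a URL with no query string gets `?sort=ratecount`, and a URL that already has `sort=XXXX` gets that value swapped out.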

Awesome, thanks!